I remember sitting in a windowless conference room three years ago, watching a high-priced consultant present a “comprehensive fairness framework” that looked more like a legal shield than a technical solution. They were using jargon so dense it felt like they were intentionally hiding the fact that their math was fundamentally broken. It was a classic case of performative compliance, where the goal wasn’t to actually fix anything, but to check a box. This is the problem with the current state of algorithmic bias auditing; too many people treat it as a bureaucratic ritual rather than a brutal reality check for the code we ship.

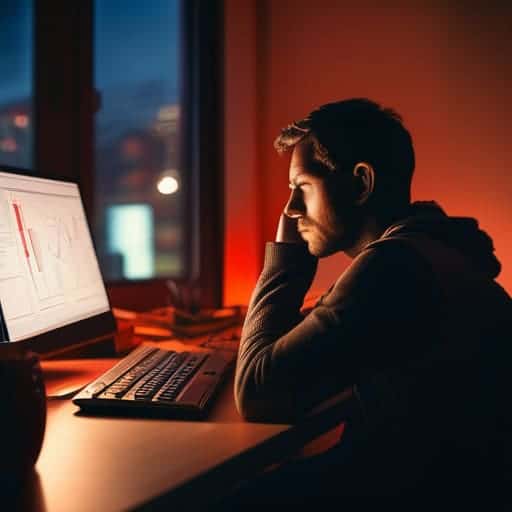

I’m not here to sell you on some expensive, polished slide deck that leaves your engineers more confused than when they started. Instead, I want to pull back the curtain on what actually works when you’re staring down a biased dataset at 2:00 AM. I’m going to give you the unfiltered, battle-tested truth about how to run an audit that actually catches errors, rather than just making your legal team feel better. We’re going to skip the fluff and focus on the real-world mechanics of making sure your models aren’t quietly making life harder for the people they’re supposed to serve.


Exposing Bias Detection in Training Data

If you want to find where the rot starts, you have to look at the foundation: the training data. We like to pretend that math is neutral, but data is just a digital mirror of our own messy, prejudiced history. If your dataset is skewed—say, it’s heavily weighted toward one demographic or reflects historical hiring prejudices—your model isn’t “learning” logic; it’s just automating old mistakes. Effective bias detection in training data isn’t about running a single script and calling it a day; it’s about interrogating the source to see whose voices are missing and whose are being amplified.

This is where things get messy. You can’t just look for obvious outliers; you have to hunt for the subtle, systemic patterns that consistently favor some groups and disadvantage others. This process is a core pillar of mitigating algorithmic discrimination, because once a biased pattern is baked into the weights of a neural network, it becomes incredibly difficult to untangle. You aren’t just looking for errors; you are performing a sort of digital forensics to ensure the model isn’t inheriting the very inequalities we’re trying to outrun.
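As a first pass at that forensics, it can help to tabulate how much of the dataset each group makes up and how often each group carries the positive label. The sketch below is a minimal illustration, not a complete audit: the `audit_training_data` helper, the `group` and `hired` field names, and the toy rows are all hypothetical.

```python
from collections import Counter, defaultdict


def audit_training_data(rows, group_key, label_key):
    """Return each group's share of the dataset and its positive-label rate."""
    counts = Counter(row[group_key] for row in rows)
    positives = defaultdict(int)
    for row in rows:
        if row[label_key] == 1:
            positives[row[group_key]] += 1
    total = len(rows)
    return {
        group: {"share": n / total, "positive_rate": positives[group] / n}
        for group, n in counts.items()
    }


# Hypothetical toy hiring data: group A dominates and is hired far more often.
rows = (
    [{"group": "A", "hired": 1}] * 60
    + [{"group": "A", "hired": 0}] * 20
    + [{"group": "B", "hired": 1}] * 5
    + [{"group": "B", "hired": 0}] * 15
)

report = audit_training_data(rows, "group", "hired")
for group, stats in sorted(report.items()):
    print(group, f"share={stats['share']:.2f}",
          f"positive_rate={stats['positive_rate']:.2f}")
```

On this toy data, group A is 80% of the rows with a 75% positive rate, while group B is 20% of the rows with a 25% positive rate. A gap like that doesn’t prove the labels are unfair, but it tells you exactly where to start asking questions.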

The High Stakes of Automated Decision Making Accountability

When we talk about automated decision-making accountability, we aren’t just discussing technical glitches or minor mathematical errors. We are talking about real people losing out on mortgages, jobs, or even medical care because a black-box model decided they didn’t fit a certain profile. When these systems operate without oversight, they don’t just reflect societal prejudices—they amplify them at scale. If a model decides to deny a loan based on a proxy for race, the damage is done before a human even realizes a mistake was made.
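One rough way to spot a proxy is to ask how well a supposedly neutral feature guesses the protected attribute on its own. The sketch below compares that guess against the baseline of always predicting the largest group. The `proxy_strength` helper, the zip codes, and the group labels are hypothetical, and a real audit would also look at combinations of features, not just one at a time.

```python
from collections import Counter, defaultdict


def proxy_strength(feature_values, group_values):
    """Compare guessing the group from a feature against the majority baseline.

    Returns (baseline_accuracy, feature_accuracy). A feature accuracy well
    above the baseline suggests the feature can stand in for the group.
    """
    total = len(group_values)
    baseline = Counter(group_values).most_common(1)[0][1] / total

    # For each feature value, count how many rows fall in each group.
    by_value = defaultdict(Counter)
    for feature, group in zip(feature_values, group_values):
        by_value[feature][group] += 1

    # Guess the most common group for each feature value.
    correct = sum(c.most_common(1)[0][1] for c in by_value.values())
    return baseline, correct / total


# Hypothetical toy data: zip code lines up closely with group membership.
zip_codes = ["10001"] * 10 + ["20002"] * 10
groups = ["A"] * 9 + ["B"] * 1 + ["A"] * 2 + ["B"] * 8

baseline, accuracy = proxy_strength(zip_codes, groups)
print(f"baseline={baseline:.2f} from_zip_code={accuracy:.2f}")
```

Here the zip code alone identifies the group 85% of the time against a 55% baseline, so dropping the protected column from the training set would not stop the model from using it.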

This is why relying solely on “set it and forget it” deployment is a recipe for disaster. We cannot treat AI as a neutral arbiter; it is a reflection of the data we feed it and the goals we set. Moving beyond mere error rates toward robust AI governance frameworks is the only way to ensure these tools serve society rather than undermine it. Without a rigorous commitment to mitigating algorithmic discrimination, we risk building a digital infrastructure that automates inequality under the guise of efficiency. It’s not just about fixing code; it’s about protecting the fundamental fairness of our social institutions.

How to Actually Audit Without Losing Your Mind

- Stop treating your dataset like a holy relic; just because it’s massive doesn’t mean it isn’t fundamentally broken or skewed.

- Look beyond the math and bring in the humans—if your audit team is a monolith, your results are going to have massive blind spots.

- Don’t just aim for “fairness” as a vague concept; you need to pick specific, measurable metrics before you even touch the code.

- Build a continuous feedback loop instead of a one-and-done checkup, because models drift and bias creeps back in like clockwork.

- Document the “why” behind every decision, because when things go sideways, a spreadsheet of numbers won’t save you from a regulatory nightmare.
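To make “specific, measurable metrics” concrete, here is a minimal sketch of three commonly used group-fairness measures: the demographic parity difference, the disparate impact ratio, and the equal opportunity difference. The function names and toy data are hypothetical, and the code assumes binary labels and predictions, at least one positive label per group, and a nonzero selection rate for the reference group.

```python
def group_metrics(labels, preds, groups):
    """Selection rate and true positive rate for each group."""
    buckets = {}
    for y, p, g in zip(labels, preds, groups):
        buckets.setdefault(g, []).append((y, p))

    metrics = {}
    for g, pairs in buckets.items():
        preds_for_positives = [p for y, p in pairs if y == 1]
        metrics[g] = {
            "selection_rate": sum(p for _, p in pairs) / len(pairs),
            "tpr": sum(preds_for_positives) / len(preds_for_positives),
        }
    return metrics


def fairness_gaps(metrics, reference, comparison):
    """Gaps between a comparison group and a reference group."""
    ref, other = metrics[reference], metrics[comparison]
    return {
        "demographic_parity_diff": other["selection_rate"] - ref["selection_rate"],
        "disparate_impact_ratio": other["selection_rate"] / ref["selection_rate"],
        "equal_opportunity_diff": other["tpr"] - ref["tpr"],
    }


# Hypothetical toy predictions for two groups of five people each.
labels = [1, 1, 1, 0, 0, 1, 1, 0, 0, 0]
preds = [1, 1, 0, 1, 0, 1, 0, 0, 0, 0]
groups = ["A"] * 5 + ["B"] * 5

gaps = fairness_gaps(group_metrics(labels, preds, groups), "A", "B")
for name, value in gaps.items():
    print(f"{name}: {value:.3f}")
```

On this toy data, group B is selected at one third the rate of group A, well under the 0.8 ratio often used as a screening threshold (the “four-fifths rule”). Which of these numbers you commit to, and what gap you will tolerate, is the decision to write down before the audit starts.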

The Bottom Line

Auditing isn’t just a technical checkbox; it’s about catching the systemic unfairness we accidentally bake into our datasets before it scales.

Accountability can’t be an afterthought—if your automated systems are making life-altering decisions, you need a clear paper trail for how those calls are made.

We have to move past the “black box” excuse and start treating algorithmic transparency as a fundamental requirement, not a luxury.

The Myth of Neutral Code

“We have to stop treating algorithms like they’re some kind of objective truth falling from the sky. They aren’t. They’re mirrors—and if we aren’t actively auditing them, we’re just polishing the glass so we can stare at our own systemic prejudices without feeling guilty.”


The Road Ahead

If you’re feeling overwhelmed by the sheer technical complexity of these audits, you don’t have to go it alone. I’ve found that stepping away from the dense documentation to clear your head is often the best way to spot the patterns you’re missing. A short mental reset lets you return to the code with fresh eyes, and it keeps you from burning out before the real work begins.

At the end of the day, auditing isn’t just a box to check or a tedious compliance hurdle to clear before launch. We’ve seen how bias hides in the shadows of training data and how easily automated systems can scale systemic unfairness if we aren’t looking. It’s a multi-layered battle that requires us to scrutinize everything from the initial data collection to the high-stakes decisions these models make in the real world. If we don’t commit to this constant, rigorous oversight, we aren’t just building technology; we are effectively automating inequality and calling it progress.

But there is a better way forward if we choose to take it. We have the tools, the data, and the collective intelligence to build systems that actually serve everyone, rather than just reinforcing the status quo. This isn’t about being perfect—no algorithm is—but about being relentlessly accountable. Let’s stop treating algorithmic bias as an inevitable byproduct of innovation and start treating it as a design flaw that we have the power to fix. The goal shouldn’t just be smarter code, but fairer outcomes for every single person impacted by it.

Frequently Asked Questions

How do you actually measure "fairness" when different mathematical definitions of it end up contradicting each other?

Here’s the uncomfortable truth: you can’t have it all. Impossibility results in the fairness literature show that certain metrics are mutually exclusive: when groups have different base rates, an imperfect model cannot equalize precision, false positive rates, and false negative rates across those groups all at once. Satisfy one and you mathematically break another. It’s not a technical bug; it’s a fundamental trade-off. Instead of chasing a perfect equation, we have to stop treating fairness like a math problem and start treating it like a policy decision. We have to pick our values, own the trade-offs, and be transparent about what we’re sacrificing.
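A few lines of arithmetic show the trade-off. The sketch below gives two hypothetical groups identical error rates (same true positive rate, same false positive rate) but different base rates, then computes the precision each group ends up with. The numbers are made up for illustration.

```python
def positive_predictive_value(base_rate, tpr, fpr):
    """Precision implied by a group's base rate and the model's error rates."""
    true_positives = base_rate * tpr
    false_positives = (1 - base_rate) * fpr
    return true_positives / (true_positives + false_positives)


# Same error rates for both groups, so the model looks "equal" on that axis.
TPR, FPR = 0.8, 0.2

# Hypothetical base rates: half of group A is truly positive, a fifth of group B.
ppv_a = positive_predictive_value(base_rate=0.5, tpr=TPR, fpr=FPR)
ppv_b = positive_predictive_value(base_rate=0.2, tpr=TPR, fpr=FPR)

print(f"precision for group A: {ppv_a:.2f}")
print(f"precision for group B: {ppv_b:.2f}")
```

With equal error rates, a positive prediction is right about 80% of the time for group A but only about 50% of the time for group B. Equalizing precision instead would force the error rates apart. Neither choice is a bug; it is the policy decision described above.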

Is it even possible to fix bias once a model has already been trained, or are we just applying digital Band-Aids to a broken foundation?

It’s a fair question, and honestly, it’s a bit of both. If the foundation is cracked, a post-training patch is just a Band-Aid. You can use post-processing techniques like group-specific decision thresholds or score recalibration to blunt the edges, and if retraining is on the table, re-weighting the training data or adversarial debiasing go a step further. But a patch on the outputs doesn’t fix the core misunderstanding the model has about the world. You’re just teaching it to play nice. Real progress requires going back to the source: the data itself.
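One example of a post-training patch is to leave the model untouched and pick a separate decision threshold per group, so that each group reaches the same minimum true positive rate. The sketch below is a simplified illustration under that assumption: the helper names, scores, and the 0.75 target are hypothetical, and it assumes every group has at least one positive label in the validation data.

```python
import math


def threshold_for_target_tpr(scores, labels, target_tpr):
    """Highest score cutoff at which the true positive rate meets target_tpr."""
    positive_scores = sorted(
        (s for s, y in zip(scores, labels) if y == 1), reverse=True
    )
    # Number of true positives we must accept to reach the target rate.
    needed = max(1, math.ceil(target_tpr * len(positive_scores)))
    return positive_scores[needed - 1]


def true_positive_rate(scores, labels, threshold):
    """Share of truly positive cases scored at or above the threshold."""
    hits = [s >= threshold for s, y in zip(scores, labels) if y == 1]
    return sum(hits) / len(hits)


# Hypothetical validation scores: the model scores group B lower overall.
validation = {
    "A": {"scores": [0.9, 0.8, 0.7, 0.6, 0.4, 0.3], "labels": [1, 1, 1, 0, 1, 0]},
    "B": {"scores": [0.6, 0.5, 0.35, 0.2], "labels": [1, 0, 1, 0]},
}

thresholds = {
    group: threshold_for_target_tpr(d["scores"], d["labels"], target_tpr=0.75)
    for group, d in validation.items()
}

for group, d in validation.items():
    tpr = true_positive_rate(d["scores"], d["labels"], thresholds[group])
    print(group, f"threshold={thresholds[group]}", f"tpr={tpr:.2f}")
```

On this toy data, a single cutoff of 0.7 would catch three of four qualified people in group A and none in group B; the per-group cutoffs close that gap. The model’s scores are exactly as skewed as before, though, which is why this counts as a Band-Aid and not a fix.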

Who gets to decide what a "fair" outcome looks like—the engineers building the code, or the communities being impacted by it?

If we leave it solely to the engineers, “fairness” becomes just another optimization problem to be solved with math. But you can’t solve a social crisis with a line of code. True accountability means moving the goalposts: the communities being impacted must have a seat at the design table. Engineers build the tools, but the people living under them should be the ones defining the standard of justice. Otherwise, we’re just automating inequality.